Can AI make me a musical star?

Like a turkey dozing off when talk turns to Christmas, I confess to tuning out when talk turns to AI. Or rather I used to, until a few weeks ago. Before then, AI seemed vital and foreboding, yet somehow also remote and incomprehensible. But now my attention is hooked. The difference lies in the no-longer-unique sound of the human voice.

Deepfake vocal clones are here. The technology behind them isn’t new, but rapid advances in accuracy and availability have made AI-generated voice copying go viral this year. Microsoft says its Vall-E software can mimic a person’s voice based on just three seconds of audio. Vall-E hasn’t yet been released to the public, but other tools with similarly powerful capabilities are easily obtained.

A flashpoint came in January when tech start-up ElevenLabs released a powerful online vocal generator. Faked voices of celebrities immediately flooded social media. Swifties on TikTok concocted imaginary inspirational messages from Taylor Swift (“Hey it’s Taylor, if you’re having a bad day just know that you are loved”). At the other end of the spectrum, 4chan trolls created fake audio clips of celebrities saying hateful things.

Other voice generators duplicate singing as well as speech. Among the countless mock-ups circulating on social media is a synthetic but convincing-sounding Rihanna covering Beyoncé’s “Cuff It”. Digitally resurrected foes Biggie Smalls and Tupac Shakur make peace in a jointly rapped version of Kanye West and Jay-Z’s “N****s in Paris”. David Guetta made an AI Eminem voice rapping about a “future rave sound” for a live DJ set. Referring to what he called his “Emin-AI-em” creation, he explained afterwards that “obviously I won’t release this commercially”.

In April, a track called “Heart on My Sleeve” became the first voice-clone hit, notching up millions of streams and views. Purportedly made by a mysterious figure called Ghostwriter, it’s a duet featuring AI-generated versions of Canadian superstars Drake and The Weeknd.

The lyrics resemble a bad parody of the pair’s real work. “I got my heart on my sleeve with a knife in my back, what’s with that?” the fake Drake raps, evidently as mystified as the rest of us. But the verisimilitude of the vocals is impressive. So realistic are they that there has been groundless speculation that the whole thing is a wormhole publicity stunt in which the two acts are supposedly pretending to be their AI-created avatars.

“Heart on My Sleeve” was removed from streaming platforms after a complaint from the artists’ label, Universal Music Group, although it’s simple enough to find online. A murky legal haze covers vocal cloning. The sound of a singer’s voice, its timbre, doesn’t have the same protection in law as the words and melodies they’re singing. Their voice might be their prize asset, but its sonic frequency isn’t theirs to copyright. It appears that I am at liberty to make, or try to make, an AI model of my favourite singer’s inimitable tones, though what I am allowed to do with it depends on how I use it.

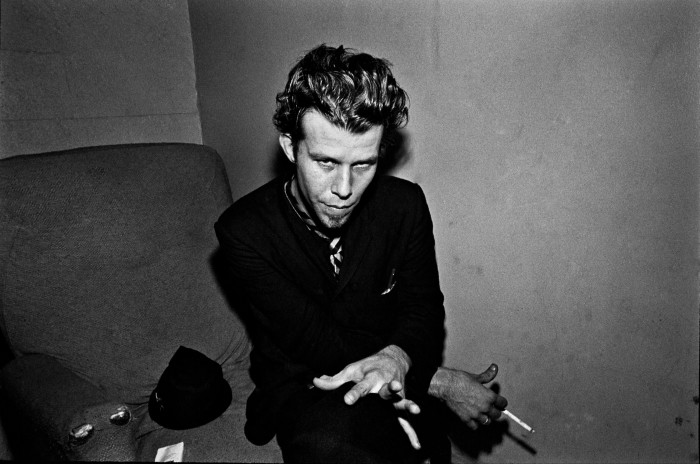

Unlike the famous rappers and pop stars who are the typical targets for cloning, my choice is a vintage act: Tom Waits, a gravelly mainstay of my musical life since my student days.

Now 73, the Californian singer-songwriter released his first album 50 years ago. His songs have been succinctly characterised by his wife and collaborator Kathleen Brennan as either “grim reapers” that clank and snarl and brawl or “grand weepers” that serenade and bawl. Take note, AI Drake and AI The Weeknd, this is real heart-on-sleeve stuff.

Aside from my being a fan, a reason to pick him is his distinctive singing style, a cataract roar to rival Niagara Falls. Another is the frustrating absence of any new music from him: his most recent album came out in 2011. I therefore set myself the challenge of using online generative tools to create a surrogate for the real thing, a new song that will endeavour to put the AI into Tom Waits.

As with any unfamiliar task these days, the first port of call is a YouTube tutorial. There I find a baseball-hatted tech expert from the US, Roberto Nickson, demonstrating the power of voice generators with an uncanny Kanye West impression that went viral at the end of March. He chose the rapper’s voice because he’s a fan, but also because it was the best voice model that he could find at the time.

Set to a Ye-style beat that he found on YouTube, Nickson’s Ye-voiced verses make the rapper seem to apologise for his shocking antisemitic outbursts last year. “I attacked a whole religion all because of my ignorance,” Nickson raps in the vocal guise of Kanye. (In reality, the rapper offered a sorry-not-sorry apology last year in which he said he didn’t regret his comments.)

“When I made that video, these machine-learning models were brand new,” Nickson tells me in a video call, sitting behind a microphone in his filming studio in Charlotte, North Carolina. The 37-year-old is a tech entrepreneur and content creator. He came across the Kanye voice model while browsing a Ye-inspired music-remix forum called Yedits on the internet site Reddit.

“It was a novelty, no one had seen it,” he says of the AI-generated Ye voice. “Like, the tutorial had about 20 views on YouTube. And I looked at it and went, ‘Oh my God.’ The reason I knew it was going to be huge wasn’t just that it was novel and cool, but also because the copyright conversation around it is going to change everything.”

Voice cloning also raises ethical questions. Nickson, who isn’t African-American, was criticised online for using a black American voice. “I had a lot of comments calling it digital blackface. I was trying to explain to people, hey look, at the time this was the only good model available.”

Elsewhere on his YouTube channel are guides to making your own celebrity voice. Guided by his tutorials, I enrol as a member of an AI hub on Discord, the social-media platform founded by computer gamers. There you can find vocal models and links to the programming tools for processing them.

These tools have abstruse names like “so-vits-svc” and initially look bewildering, though it’s possible to use them without programming experience. The voice models are formulated from a cappella vocals taken from recordings, which are turned into sets of data. It takes several hours of processing to create a convincing musical voice. Modellers refer to this as “training”, as though the vocal clone were a pet.

Amid the Travis Scotts and Bad Bunnies on the Discord hub is a Tom Waits voice. It’s demonstrated by a clip of the AI-generated Waits bellowing a semi-plausible version of Lil Nas X’s country-rap hit “Old Town Road”. But I can’t make the model work. So I turn instead to a website that will do it for me.

Voicify.ai creates voices for users. It was set up by Aditya Bansal, a computer science student at Southampton University. He noticed AI cover songs mushrooming and within a week had his website up and running. Speed is of the essence in a gold rush.

“Because the tech is quite new, there’s a lot of people working on it and trying to get a product out, so I had to do it quickly,” the 20-year-old says by video call. He has made an AI voice for himself, in the style of the deceased American rapper Juice Wrld, “but my singing voice isn’t good so I can’t reach the notes.” (As I will learn, a degree of musical talent is needed in the world of AI-generated songcraft.)

When we speak, Bansal is a week away from second-year exams for which he hasn’t yet started revising. With payment tiers ranging from £8.99 to £89.99, Voicify.ai is proving a lucrative distraction. “It started off pretty much US/UK,” he says of its users. “Now I’ve seen it go worldwide.” Record labels have also contacted him, wanting to make models of their artists for demo tracks, which are used as sketches before the full recording process.

He won’t put an exact figure on his earnings but his laugh carries a disbelieving note when I ask. “It’s a lot,” he says, with a smile shading from bashful to gleeful.

To create my voice, I go to another site to extract a cappella sound files of Waits singing tracks from his album Rain Dogs, which I then feed into Voicify.ai. Several hours later, my AI Waits is ready. I test it with Abba’s “Dancing Queen”, dragging and dropping an MP3 of the song into the website.

The song re-emerges with the Abba vocals replaced by the AI-generated Waits voice. It starts in a rather wobbly way, as if the Waits-bot is flummoxed by the assignment. But by the time it reaches “Friday night and the lights are low”, it’s bellowing away with full-throated commitment. It really does sound like Tom Waits covering Abba. Next comes the trickier hurdle of making a new song.

One possible obstacle is the law. In 1990, Waits won a landmark court case in the US against Frito-Lay, manufacturers of Doritos corn chips, for using a gruff-voiced impersonator in an advertisement. Could the same apply to AI vocal clones? The Recording Industry Association of America argues that algorithmic voice training infringes on artists’ copyright as it involves their recordings, like my use of Rain Dogs’ songs. But that can be countered by fair use arguments that protect parodies and imitations.

“If we do get a court case, it will come to whether you’re trying to make money from it, or is it a viral parody that you’re doing for legitimate purposes?” reckons Dr Luke McDonagh of the London School of Economics, an expert on intellectual property rights and the arts. “If you’re doing it to make money, then the law will stop you because you’re essentially free-riding on the brand image, the voice of someone else’s personality. It will be caught by the law in some way, but it’s not necessarily a matter for copyright.”

Alas, but perhaps happily from the point of view of legal fees, my AI Waits impression will not trigger a definitive voice-clone update of Waits vs Frito-Lay. The reason lies not in the dense thickets of jurisprudence but in the woefulness of my attempted AI-assisted mimicry.

To get lyrics I go to ChatGPT, the AI chatbot released last November by research laboratory OpenAI. It responds to my query for a song in the style of Tom Waits with a game but facepalmy number called “Gritty Troubadour’s Backstreet”.

“The piano keys are worn and weary,/As he pounds them with a weathered hand,/The smoke curls ’round his whiskey glass,/A prophet of a forgotten land,” runs a verse. This clunky pastiche, produced with incredible speed from analysing Waitsian lyrical matter contained on the internet, conforms to the grand weeper side of the singer’s oeuvre.

For the tune, I turn to Boomy, an AI music creator. Since launching in California in 2019, it claims to have generated more than 15mn songs, which it calculates as 14 per cent of the world’s recorded music. Earlier this month, Spotify was reported to have purged tens of thousands of Boomy-made songs from its catalogue following accusations about bots swarming the site to artificially boost streaming numbers.

My additions to Boomy’s immense pile of songs are undistinguished. To create a track, you pick a style, such as “lo-fi” or “global groove”, and then set basic parameters, like the drum sound and tempo. There isn’t an option to select the style of a named artist. After fiddling with it to make the music as jazzy as possible, I end up with an odd beat-driven thing with a twangy bass.

There’s a button for adding vocals. To my mortification, I find myself hollering “Gritty Troubadour’s Backstreet” at my computer in my gruffest voice over the weird Boomy music. Then it’s back to Voicify.ai to Waits-ify the song. The result is a monstrosity. My Waits voice sounds like a hoarse English numpty enunciating doggerel. My experiment with AI voice generation has been undone by a human flaw: I can’t sing.

You need musical skill to make an AI song. The voice clones require a real person to sing the tune or rap the words. When a UK rock band called Breezer released an imaginary Oasis album last month under the name “Aisis”, they used a voice clone to copy Liam Gallagher but wrote and performed the songs themselves. “I sound mega,” the real Gallagher tweeted after hearing it.

Artists are divided. Electronic musician Grimes, a committed technologist, is creating her own voice-mimicking software for fans to use provided they split royalty earnings with her. In contrast, Sting recently issued an old-guard warning about the “battle” to defend “our human capital against AI”. After a vocal double imitated him covering a song by female rapper Ice Spice, Drake wrote on Instagram, with masculine pique: “This the final straw AI”.

“People are right to be concerned,” Holly Herndon states. The Berlin-based US electronic musician is an innovative figure in computer music who used a custom-made AI recording system for her 2019 album Proto. Her most recent recording is a charmingly mellifluous duet with a digital twin, Holly+, in which they cover Dolly Parton’s tale of obsessive romantic rivalry, “Jolene”.

Holly+’s voice was cloned from recordings of Herndon singing and speaking. “The first time I heard my husband [artist and musician Mat Dryhurst] sing through my voice in real time, which was always our goal, was very striking and memorable,” she says by email. The cloned voice has been made available for public use, though not as a free-for-all: a “clear protocol of attribution”, in Herndon’s words, regulates usage. “I think being permissive with the voice in my circumstance makes the most sense, because there is no way to put this technology back in the box,” she explains.

Almost every stage of technological development in the history of recorded music has been accompanied by dire forecasts of doom. The rise of radio in the 1920s provoked anxiety about live music being undermined. The spread of drum machines in the 1980s was nervously observed by drummers, who feared landing with a tinny and terminal thump on the scrap heap. In neither case were these predictions proved correct.

“Drumming is still thriving,” Herndon says. “Some artists became virtuosic with drum machines, synths and samplers, and we pay attention to the people who can do things with them that are expressive or impressive in ways that are hard for anyone to achieve. The same will be true for AI tools.”

Pop music is the medium that has lavished the most imaginative resources on the sound of the voice over the past century. Since the adoption of electric microphones in recording studios in 1925, singers have been treated as the focal point in records, like Hollywood stars in close-up on the screen. Their vocals are designed to get inside our heads. Yet famous singers are also far away, secreted behind their barrier of celebrity. Intimacy is united with inaccessibility.

That’s why pop stars command huge social media followings. It’s also why their fans are currently running amok with AI voice-generating technology. The ability to make your idol sing or speak takes pop’s illusion of closeness to the logical next level. But the possessors of the world’s most famous voices can take comfort. For all AI’s deepfakery, the missing ingredient in any successful act of mimicry remains good old-fashioned talent — at least for now.

Ludovic Hunter-Tilney is the FT’s pop critic
